Removing speed limits and securing connections with Autobahn

With the Internet serving as the new corporate network, providing low-latency, secure connections to any resource is paramount. It’s why we built our Secure Access Service Edge (SASE) module in the first place. A common challenge for SASE providers, however, is throughput. Most cap out at between 300 Mb/s and 500 Mb/s, depending on networking conditions, architectures, and the protocols used to connect.

Earlier this year, we challenged our engineering team to become a leader in SASE performance while improving security. Invigorated by the opportunity, they analyzed every aspect of SASE to identify ways to reach this goal. From their analysis, the team identified several critical initiatives that would deliver the best overall experience to end users while improving security.

The first, which we recently launched, is our new Web Proxy. The second is Project Autobahn, a new technology that increases throughput for connections from devices to the Secure Global Network™ (SGN) Cloud Platform. We're excited to announce that Project Autobahn is now live.

With Project Autobahn, we had three primary goals:

- Remove speed bottlenecks to achieve maximal throughput

- Ensure the scalability and stability of the architecture as throughput multiplies

- Strengthen security through data packet processing and extendibility improvements

Testing the prior implementation

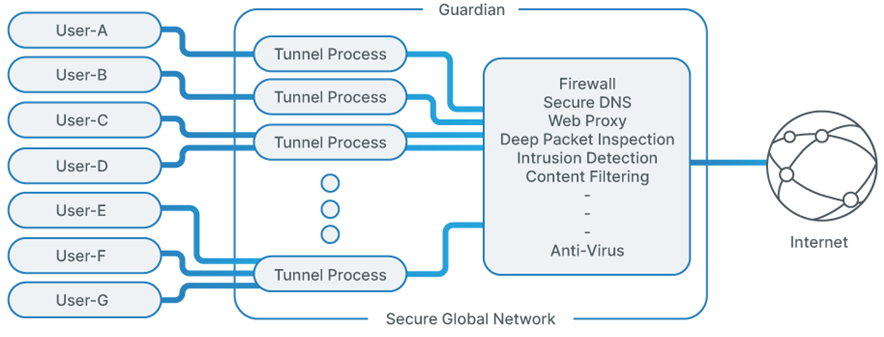

With our goals set, we needed to first understand how far we could push our prior technology that handles connections from devices to the SGN. This technology is a critical component, serving to create a secure tunnel between the user’s device and the SGN while providing three critical functions: authentication, encryption, and routing. Every single data packet protected by the SGN is processed here, making it a key part of the performance equation. We had to minimize latency and maximize throughput to achieve our goal.

Our SGN engineering team ran all manner of tests, pushing our prior implementation to its limits to see if we could increase speeds by at least 3x while lowering CPU utilization on the agent. We load tested different designs across different network conditions, simulating a broad spectrum of real-world scenarios to identify bottlenecks.

The results of the tests highlighted opportunities for massive performance improvements. Realizing them, however, required our teams to build a new technology from the ground up, coordinating across our various engineering teams and working with our data center partners to ensure success.

Back to the drawing board

With our initial goal set, we knew the project would require changes from top to bottom, including a new SGN Connect Agent, different API integrations with Todyl’s portal, new encryption protocols, different packet processing, and much more.

The first step to improving speeds was evaluating existing cryptographic libraries for Project Autobahn’s encryption/decryption process. We had to determine which library would allow for faster packet processing by limiting the number of CPU instructions and memory accesses.

We evaluated seven popular cryptographic libraries, optimizing for the hardware architecture and accelerators available in the SGN. After testing all seven, we learned there is more than a 40% difference between the slowest and fastest encryption functions. Based on these results, we selected the fastest one in our test.

From there, we needed to optimize routing. Routing requires the copying/moving of packets, which typically is much more expensive than routing table lookups (longest prefix match). In fact, the processing costs can be higher than every other function performed, including encryption/decryption. We had to minimize these costs.
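For readers unfamiliar with the term, a longest prefix match picks the most specific route that contains the destination address. The sketch below shows the idea with Python's standard `ipaddress` module. The routing table and next-hop labels are hypothetical, and the linear scan is for illustration only; it is not how Project Autobahn implements lookups.

```python
import ipaddress

# Hypothetical routing table: prefix -> next-hop label (illustrative values only).
ROUTES = {
    ipaddress.ip_network("0.0.0.0/0"): "default-gw",
    ipaddress.ip_network("10.0.0.0/8"): "core",
    ipaddress.ip_network("10.1.0.0/16"): "site-a",
    ipaddress.ip_network("10.1.2.0/24"): "site-a-lab",
}


def longest_prefix_match(dst: str) -> str:
    """Return the next hop of the most specific route containing dst."""
    addr = ipaddress.ip_address(dst)
    # Keep only routes that contain the address, then pick the longest prefix.
    matches = [net for net in ROUTES if addr in net]
    best = max(matches, key=lambda net: net.prefixlen)
    return ROUTES[best]
```

The lookup only reads a small table, whereas copying moves every byte of every packet, which is why the copy cost tends to dominate.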

Packet copying/moving happens between kernel-space memory, user-space memory, and interfaces. One option to reduce copying is to place packet processing in the kernel. However, after several tests, we decided on user-space due to the other functions our SASE performs (e.g., intrusion detection and deep packet inspection). This allows Project Autobahn to provide the packet hook for these applications, which yields faster speeds because it requires fewer packet copies than a kernel hook like NFQUEUE.

Ensuring that high speed scales

With the speed limits shattered by our new SASE tunnel implementation, we needed to ensure those speeds would be maintained as more users connected to the SGN. Building something this fast would be meaningless if it couldn’t scale.

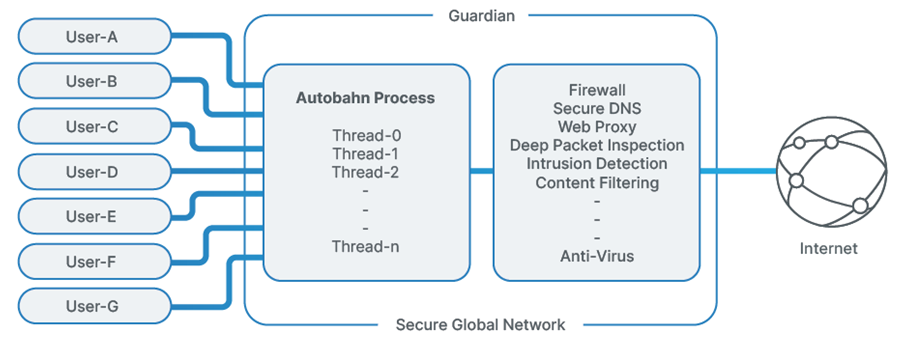

When designing Project Autobahn, we focused on writing an implementation that scales within a node. Single-threaded solutions can quickly be fully consumed when many users connect. Working around the issue requires complex engineering, including running multiple instances listening on different ports while load balancing across machine and VPN processes. The complexity of a single-threaded solution is costly: engineering must manage the performance, configuration, logs, and load across many processes, which makes it difficult to scale optimally within a node (see Figure 1).

Our engineering team knew the shortcomings of a single-threaded implementation, so they designed Project Autobahn to be multi-threaded from day one. To ensure the application could scale, we needed to evenly distribute the work and minimize synchronization. For work distribution, we rely on the operating system. We built Project Autobahn to listen on a single port with the SO_REUSEPORT socket option. By doing this, packets are distributed to threads using a hash on the source IP address and port, resulting in a well-balanced load with minimal overhead (see Figure 2).
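As a rough illustration of the single-port design, the sketch below opens several sockets that all bind to the same port with `SO_REUSEPORT`, one per worker thread. It assumes Linux and a UDP transport, neither of which is confirmed by the description above, and the function names are hypothetical.

```python
import socket


def open_reuseport_socket(port: int, host: str = "127.0.0.1") -> socket.socket:
    """Open a UDP socket that can share its port with similarly configured sockets."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    # SO_REUSEPORT must be set before bind() on every socket sharing the port.
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEPORT, 1)
    sock.bind((host, port))
    return sock


def open_worker_sockets(n_workers: int, host: str = "127.0.0.1"):
    """Return (port, sockets): n_workers sockets all bound to one OS-chosen port."""
    first = open_reuseport_socket(0, host)  # port 0 lets the OS pick a free port
    port = first.getsockname()[1]
    rest = [open_reuseport_socket(port, host) for _ in range(n_workers - 1)]
    return port, [first] + rest
```

Each worker thread would then call `recvfrom` on its own socket, and the kernel decides which socket receives a given datagram, so the application needs no load-balancing logic of its own.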

This design required us to think through ways to protect access to shared data. To scale efficiently, we had to reduce lock-based synchronization, because locks serialize execution and, per Amdahl's law, any serialized portion caps the achievable speedup. To accomplish this, we strove to avoid sharing data between threads, applying this technique liberally while writing Project Autobahn. However, some data still had to be shared to support important application features. We solved this by using lock-free algorithms to protect access while maximizing parallel execution. The result is an application that scales near linearly with the number of CPUs on the node.
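To make the Amdahl's law point concrete, the small helper below computes the theoretical speedup for a given serialized fraction of the work. The fractions and worker counts used with it are illustrative inputs, not measurements from Project Autobahn.

```python
def amdahl_speedup(serial_fraction: float, workers: int) -> float:
    """Theoretical speedup when `serial_fraction` of the work cannot run in parallel."""
    if not 0.0 <= serial_fraction <= 1.0:
        raise ValueError("serial_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    # The serial part takes the same time; the parallel part is split across workers.
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / workers)
```

With 16 workers, serializing just 5% of the work limits the speedup to roughly 9x rather than 16x, which is why removing shared, locked data matters more as core counts grow.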

Improving data packet processing and extendibility

Since Project Autobahn processes every packet that flows into the SGN, it provided a unique opportunity for packet orchestration. We wanted Project Autobahn to deliver more than VPN functionality. In many cases, VPN functionality is not easily extended and requires hooks in the OS to get access to packets. This is both inflexible and expensive in latency and throughput.

We built Project Autobahn to serve as both a VPN tunnel into the SGN and the orchestrator for packet processing. Because it is a critical system component, we knew from the design stage that it needed to be simple and performant. Since adding packet processing functionality into Project Autobahn itself would contradict these goals, we designed it to schedule and coordinate packet processing with other independent processes performing specific functions, such as deep packet inspection (DPI). Again, efficiency in these processes is critical to speed, so we designed the packet buffers to be shared across processes, and packets are exchanged through lock-free, single-reader/single-writer in-memory FIFO queues. This design minimizes packet copying and communication overhead.
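The queue discipline described above can be sketched as a bounded ring in which the writer only ever advances the tail and the reader only ever advances the head, so neither side needs a lock. This is a simplified, in-process illustration with hypothetical names: it passes buffer indices between two threads rather than sharing memory across processes, and it relies on CPython's global interpreter lock for ordering. In C, C++, or Rust the two indices would be atomics with acquire/release ordering.

```python
from typing import List, Optional


class SpscRing:
    """Bounded FIFO for exactly one writer thread and one reader thread."""

    def __init__(self, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        # One slot always stays empty so that full and empty states differ.
        self._slots: List[Optional[int]] = [None] * (capacity + 1)
        self._head = 0  # next slot to read; modified only by the reader
        self._tail = 0  # next slot to write; modified only by the writer

    def try_push(self, buffer_index: int) -> bool:
        """Writer side: enqueue a packet-buffer index, or return False if full."""
        nxt = (self._tail + 1) % len(self._slots)
        if nxt == self._head:
            return False
        self._slots[self._tail] = buffer_index
        self._tail = nxt  # publish only after the slot has been written
        return True

    def try_pop(self) -> Optional[int]:
        """Reader side: dequeue the oldest index, or return None if empty."""
        if self._head == self._tail:
            return None
        buffer_index = self._slots[self._head]
        self._head = (self._head + 1) % len(self._slots)
        return buffer_index


# Hypothetical shared pool: queues carry indices, so packet bytes are never copied.
PACKET_POOL = [bytearray(2048) for _ in range(8)]
```

Passing an index into a shared pool instead of the packet itself is what keeps the exchange cheap: the consumer reads `PACKET_POOL[index]` in place.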

As the packet orchestrator, Project Autobahn provides more than just a performance boost for packet analysis. It also allows for flexible packet analysis based on policy. For example, a maximum time budget could be set for DPI, and if that time is exceeded, the packet is forwarded before the DPI system responds. The current packet is forwarded using the default policy, while any future packets on the connection are processed based on the response once it arrives. While it's a tradeoff between performance and 100% DPI policy adherence, the flexibility allows administrators to make that decision instead of the system designers.
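One way to picture the time-budget policy is the sketch below. It is a minimal illustration under stated assumptions, not Project Autobahn's implementation: the class name, the verdict strings, and the `"forward"` default are all hypothetical, and the inspector is any callable that takes a packet and returns a verdict.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from typing import Callable, Dict

DEFAULT_VERDICT = "forward"  # hypothetical default policy


class BudgetedInspector:
    """Apply a per-packet time budget to a slow inspection function."""

    def __init__(self, inspect: Callable[[bytes], str], budget_s: float) -> None:
        self._inspect = inspect
        self._budget_s = budget_s
        self._pool = ThreadPoolExecutor(max_workers=1)
        self._verdicts: Dict[int, str] = {}  # connection id -> inspection verdict

    def handle(self, conn_id: int, packet: bytes) -> str:
        """Return the verdict to apply to this packet."""
        known = self._verdicts.get(conn_id)
        if known is not None:
            return known  # a response already exists for this connection
        future = self._pool.submit(self._inspect, packet)
        # Record the verdict whenever it arrives so later packets can use it.
        future.add_done_callback(
            lambda f: self._verdicts.setdefault(conn_id, f.result())
        )
        try:
            return future.result(timeout=self._budget_s)
        except FutureTimeout:
            # Budget exceeded: forward the current packet under the default policy.
            return DEFAULT_VERDICT

    def shutdown(self) -> None:
        self._pool.shutdown(wait=True)
```

The budget is the administrator's dial: a larger value favors strict policy adherence, a smaller one favors latency.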

Connectivity and security without speed limits

Project Autobahn is the culmination of months of hard work from our SGN engineering team to push the boundaries of speed and performance for a SASE solution. These improvements are just the start.

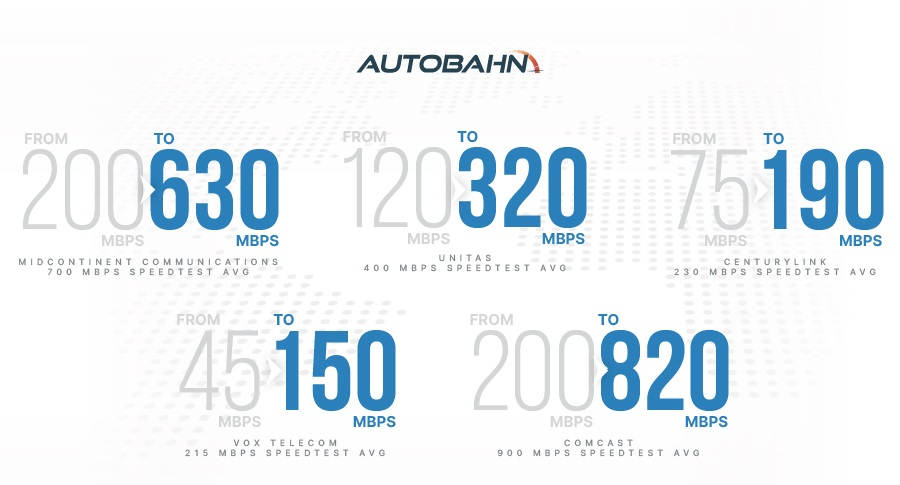

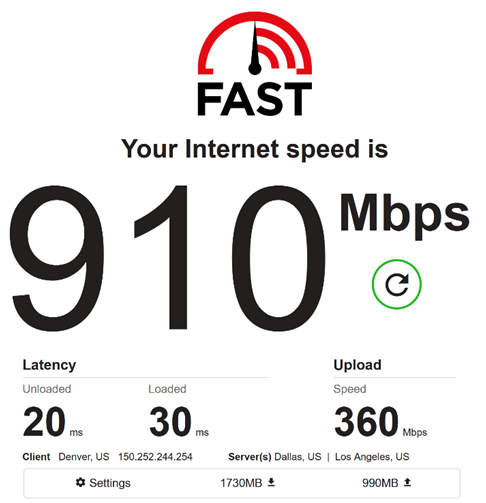

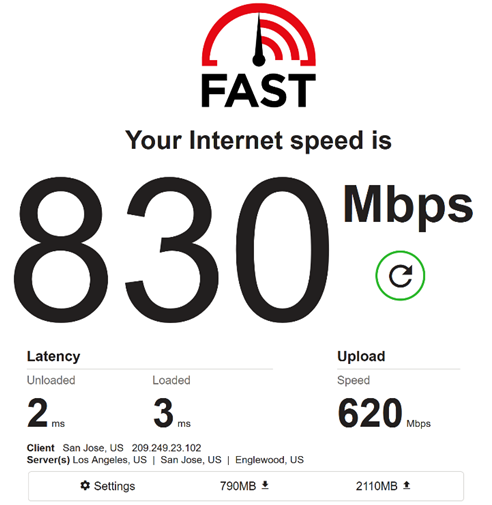

After completing the project, we brought in a leading third-party penetration testing firm based in the UK. The report came back clean, even complimenting us on the quality of the code the team wrote. The results in Figures 3, 4, and 5 demonstrate what our new implementation is capable of.

We couldn’t be more excited to share what’s next. Stay tuned on our blog for additional details on engineering projects across the Todyl Platform.
