The Rising Threat of Malicious AI: What Every Organization Needs to Know

Artificial intelligence has transformed how we work, communicate, and solve problems. From streamlining business operations to enhancing customer experiences, AI's potential to benefit society is undeniable. However, this same transformative power has a dark side. Just as AI can automate beneficial tasks, it can also automate harm, and adversaries around the globe are taking notice.

What is Malicious AI?

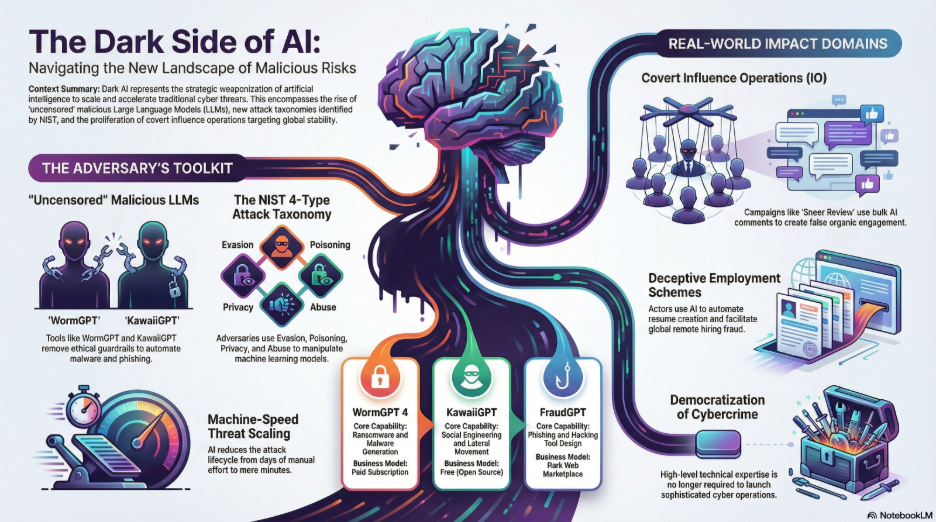

AI delivers enormous value in day-to-day use for end users, systems, and workflows. Malicious AI, by contrast, refers to artificial intelligence systems that are intentionally designed or repurposed to cause harm, deceive people, or exploit vulnerabilities. Instead of helping users, these systems automate or enhance harmful activities at unprecedented scale.

Modern threat actors are no longer limited by their technical skills or resources. They're leveraging AI tooling for sophisticated attacks including:

- Spear phishing and malware campaigns that adapt to their targets

- Post-exploitation activities and credential theft that happen in minutes rather than hours

- Generation of malicious scripts and code to extract or decrypt browser credentials and cookies

- Automated vulnerability discovery that outpaces human security researchers

- Polymorphic malware that changes its signature to evade detection

The democratization of AI technology means that what once required elite hacking skills can now be accomplished by relatively inexperienced attackers, often called "script kiddies," using readily available AI tools.

The reach of malicious AI extends across every sector of society, with no one immune to its potential consequences.

- Individuals face personal risks including sophisticated scams, identity theft, and deepfake impersonation. Your voice, likeness, or personal information can be weaponized against you or used to target people you know.

- Organizations of all sizes confront automated cyberattacks, data breaches, business email compromise (BEC), and fraud schemes that can bypass traditional security measures. The financial and reputational damage from these attacks can be devastating, with some companies losing hundreds of thousands or even millions of dollars in single incidents.

- Governments and public institutions must defend against disinformation campaigns, election interference, and threats to critical infrastructure. These attacks can erode public trust, destabilize democratic processes, and compromise national security.

- Society as a whole faces the erosion of truth itself, as deepfakes and AI-generated misinformation make it increasingly difficult to distinguish authentic content from fabricated material.

When is Malicious AI Being Used?

The short answer: constantly.

Malicious AI operates both opportunistically and persistently. Cybercriminals exploit major events, crises, elections, and breaking news to maximize the impact of their campaigns. During moments of heightened emotion or urgency, people are more vulnerable to manipulation, and AI allows attackers to capitalize on these windows at scale.

Beyond opportunistic attacks, malicious AI runs continuously in the background as threat actors seek efficiency and competitive advantage. The automation capabilities of AI mean that attacks can be launched 24/7 with minimal human oversight.

The frequency and sophistication of these attacks have increased dramatically as AI tools have become:

- Cheaper: Many malicious AI tools are free or low-cost

- Faster: Automated attacks execute in seconds or minutes

- More accessible: User-friendly interfaces require minimal technical knowledge

- More capable: Advanced language models can generate convincing, contextually appropriate content

How is Malicious AI Being Used?

Understanding the tactics employed by malicious actors is essential for mounting an effective defense.

- Content Generation: AI creates convincing phishing emails, text messages, and social media posts that are grammatically perfect and contextually appropriate, making them far more effective than traditional spam.

- Deepfake Creation: Synthetic audio and video content impersonates real people with startling accuracy, enabling fraud, extortion, and reputational attacks.

- Automated Hacking: AI systems identify vulnerabilities, craft exploits, and navigate systems faster than human defenders can respond.

- Information Warfare: AI spreads misinformation and propaganda at massive scale, creating fake personas and content that appears organic.

- Security Bypass: Machine learning models are trained specifically to evade detection systems, antivirus software, and fraud prevention tools.

- Data Analysis and Exploitation: AI rapidly processes stolen data to identify valuable information, credentials, and targets for further attacks.

By automating these tasks, attackers can operate faster, target more victims simultaneously, and reduce the technical expertise required to launch sophisticated campaigns.

Digital Security Threats

Malicious LLMs and Malware Automation

Purpose-built malicious models have emerged specifically to enable cybercrime. Two notable examples illustrate this disturbing trend:

- WormGPT: A malicious, unrestricted generative AI tool designed specifically for facilitating phishing attacks, malware development, and Business Email Compromise (BEC). Unlike legitimate AI assistants with ethical guardrails, WormGPT has no restrictions on generating harmful content.

- KawaiiGPT: Positioned as a free alternative to WormGPT with the same functionalities, making malicious AI capabilities accessible to an even broader audience of potential attackers.

These tools enable even low-skilled "script kiddies" to generate functional ransomware code, malicious scripts, and polymorphic malware that adapts to evade detection. The barrier to entry for cybercrime has never been lower.

Adversarial Machine Learning

Attackers don't just use AI; they also attack AI systems themselves through several sophisticated techniques:

- Data Poisoning: Introducing corrupted or malicious data during the training phase of AI models, causing them to learn incorrect patterns or behaviors that benefit the attacker.

- Evasion Attacks: Altering inputs to trick deployed AI systems. For example, placing specific stickers on stop signs to confuse autonomous vehicle vision systems, or modifying malware to avoid detection by AI-powered security tools.

- Model Abuse: Inserting incorrect information into legitimate sources that AI systems rely on, effectively repurposing a model's intent to serve malicious goals.
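
As a minimal sketch of data poisoning, assume a toy one-dimensional classifier that learns a single cutoff between "benign" and "malicious" scores. The scores, labels, and function names below are hypothetical and not drawn from any real detection product. The point is only to show how a handful of mislabeled training samples can shift what a model learns:

```python
from statistics import mean

def train_threshold(samples):
    """Learn a cutoff halfway between the mean benign and mean malicious score."""
    benign = [score for score, label in samples if label == "benign"]
    malicious = [score for score, label in samples if label == "malicious"]
    return (mean(benign) + mean(malicious)) / 2

def classify(score, threshold):
    """Scores at or above the cutoff are treated as malicious."""
    return "malicious" if score >= threshold else "benign"

# Hypothetical training data: (suspicion score, label)
clean = [(0.1, "benign"), (0.2, "benign"), (0.3, "benign"),
         (0.7, "malicious"), (0.8, "malicious"), (0.9, "malicious")]

# Poisoning: the attacker injects high-scoring samples labeled as benign
poisoned = clean + [(0.9, "benign"), (0.95, "benign"), (1.0, "benign")]

clean_threshold = train_threshold(clean)        # about 0.5
poisoned_threshold = train_threshold(poisoned)  # pushed upward by the bad labels

# A borderline sample is caught by the clean model but missed by the poisoned one
print(classify(0.6, clean_threshold), classify(0.6, poisoned_threshold))
```

Real models are far more complex, but the failure mode is the same: the attacker never touches the deployed system, only the data it learned from.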

Advanced Social Engineering

AI has revolutionized social engineering attacks through linguistic precision. Modern phishing and voice phishing ("vishing") campaigns feature:

- Hyper-realistic, grammatically perfect emails in multiple languages

- Audio deepfakes that convincingly mimic CEOs, trusted vendors, or family members

- Contextually aware messages that reference real projects, relationships, and organizational details

- Adaptive conversation capabilities that respond intelligently to victim questions

These AI-enhanced attacks bypass the traditional red flags that security awareness training teaches people to recognize.

Superhuman Hacking Capabilities

In specific domains, AI systems already significantly surpass human performance:

- Vulnerability identification: Scanning codebases and systems faster than any human team

- Automated exploitation: Crafting and testing exploits in rapid iteration

- Speech synthesis: Creating voice clones indistinguishable from authentic recordings

- Pattern recognition: Identifying security weaknesses in configurations and architectures

This "superhuman" capability means that defenders must also leverage AI tools just to maintain parity with attackers.

Political Security Threats: Weaponizing Information

Beyond financial cybercrime, malicious AI serves as a powerful tool for manipulating public opinion and enabling mass repression.

Covert Influence Operations (IO)

State-linked actors from China, Russia, Iran, and other nations use AI to generate bulk social media content and create networks of fake personas. These artificial accounts simulate organic engagement, amplifying propaganda narratives and drowning out authentic voices.

The scale of these operations is staggering: thousands of coordinated fake accounts posting millions of pieces of content, all generated or managed by AI systems.

Deepfakes and Misinformation

Highly believable fake videos and synthetic media spread targeted propaganda and hyper-realistic misinformation on an unprecedented scale. When anyone can be made to appear to say anything, the concept of verifiable truth itself is under attack.

These campaigns don't just spread false information; they create an environment of confusion and doubt where people struggle to trust any source.

Surveillance and Privacy Elimination

AI enables the aggregation and analysis of citizen information at a level that facilitates privacy-eliminating surveillance and profiling. Governments and other entities can:

- Track individuals across multiple data sources

- Predict behaviors and identify dissidents

- Create detailed profiles of entire populations

- Monitor communications in real-time at massive scale

This radically shifts the power balance between individuals and institutions, with profound implications for privacy, freedom, and human rights.

Election Manipulation

"Bots" powered by AI are being used to manipulate everything from news agendas to election outcomes. These systems can:

- Amplify or suppress specific narratives

- Create the illusion of grassroots support or opposition

- Spread targeted disinformation to specific voter groups

- Interfere with the information environment during critical democratic processes

Real-World Examples: When Theory Becomes Reality

Understanding abstract threats is important, but examining actual incidents brings the danger into sharp focus.

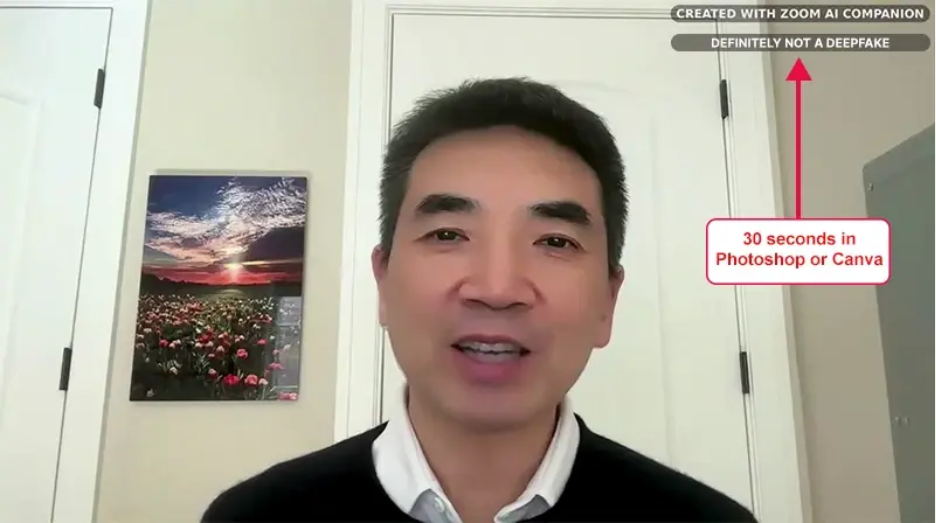

Deepfake Zoom Scam

In 2025, cybercriminals used AI deepfake technology to impersonate a company's executive during a Zoom meeting. The fake video and audio were so realistic that a finance director was convinced to transfer nearly $500,000 to the attackers before the fraud was discovered and stopped.

This case demonstrates how deepfake technology has matured beyond easily detectable fake videos to real-time impersonation capable of fooling even vigilant employees.

AI Voice Impersonation of Government Officials

An AI impostor mimicked the voice and identity of U.S. Secretary of State Marco Rubio to contact government officials and diplomats via text and voice messages. The attacker attempted to extract sensitive information or gain unauthorized access by exploiting the trust placed in the official's identity.

This incident highlights the national security implications of voice cloning technology.

AI-Powered Social Engineering & Scams

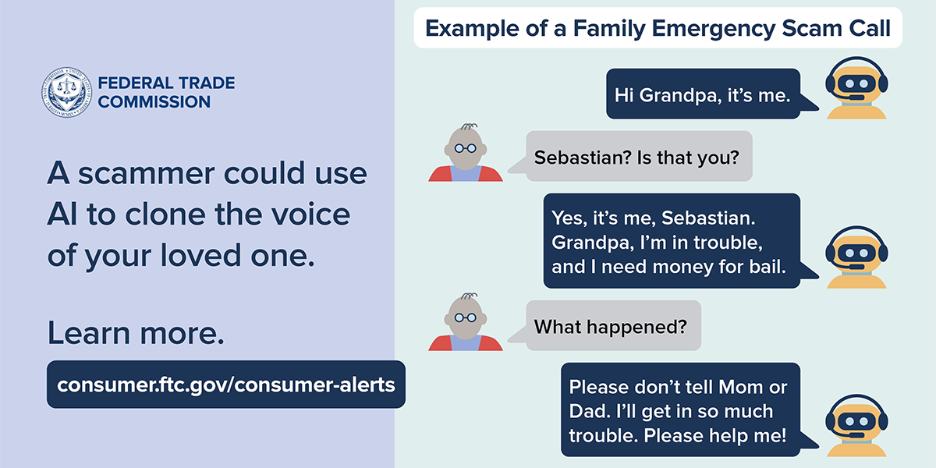

Voice Cloning Ransom and Relief Scams

Scammers have weaponized AI voice cloning to impersonate loved ones or colleagues, demanding ransom or financial help in emergency scenarios. These attacks exploit the emotional trust victims have in family members or close associates.

A typical scenario: You receive a frantic call from your child or parent claiming they've been in an accident and need money immediately. The voice sounds exactly right, down to familiar speech patterns. In reality, the scammer has cloned the voice using just a few seconds of audio from social media posts.

Celebrity Deepfake Fraud Ring

In Spain and other locations, organized fraud operations used AI-generated advertisements featuring trusted local figures and celebrities to trick over 200 global victims out of approximately $20 million in cryptocurrency investments.

The fake endorsements appeared on legitimate-looking websites and social media platforms, lending credibility to fraudulent investment schemes.

State-Level AI-Automated Cyberattacks

In 2025, Chinese state-linked hackers reportedly used Anthropic's Claude to automate attacks on approximately 30 corporate and government targets. The AI handled most attack steps with minimal human intervention, dramatically increasing the speed and scale of the operations.

This represents a watershed moment: state-sponsored threat actors successfully deploying AI to autonomously conduct sophisticated cyberattacks.

AI-Generated Malware and Phishing

Cybercriminals increasingly use AI to write adaptive malware and craft convincing phishing messages, boosting their ability to evade defenses and extract sensitive data. These AI-generated threats can:

- Adapt their behavior based on the environment they're in

- Generate unique variants to avoid signature-based detection

- Craft personalized phishing messages using information gathered from social media and data breaches
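
To see why unique variants defeat signature-based detection, consider a minimal sketch that treats a "signature" as the SHA-256 hash of a file's bytes. The byte strings and the `known_bad` set are harmless placeholders assumed for illustration; real signature engines are more elaborate, but exact-match hashing behaves this way:

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()

# Placeholder bytes standing in for a known sample and a trivially altered copy
original = b"placeholder-sample-v1"
variant = original + b" "  # one extra padding byte, otherwise identical

# Hypothetical signature database containing only the original's hash
known_bad = {sha256_hex(original)}

def signature_match(data: bytes) -> bool:
    """Exact-match lookup, as a hash-based signature check would do."""
    return sha256_hex(data) in known_bad

original_flagged = signature_match(original)  # the known sample is caught
variant_flagged = signature_match(variant)    # the one-byte variant is not
print(original_flagged, variant_flagged)
```

A single changed byte yields a completely different hash, which is why defenses that analyze behavior rather than exact bytes hold up better against generated variants.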

Browser Extension Data Theft

Two browser extensions branded as AI assistants were discovered exfiltrating user conversations with ChatGPT and DeepSeek, along with all Chrome tab URLs. This gave adversaries access to:

- Private conversations and potentially sensitive information shared with AI assistants

- URL parameters containing session tokens, user IDs, and authentication data

- Complete browsing history and activity patterns

The incident demonstrates how threat actors are exploiting the popularity of AI tools to distribute malicious software disguised as helpful utilities.
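
Because URLs can carry session tokens and identifiers, one modest mitigation is to redact sensitive query parameters before URLs are logged or shared. The sketch below uses Python's standard `urllib.parse`; the `SENSITIVE_KEYS` list and the example URL are assumptions for illustration, and this does not stop a malicious extension from reading the live URL in the browser:

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical parameter names that often carry secrets or identifiers
SENSITIVE_KEYS = {"token", "access_token", "session", "sessionid", "sid", "auth", "user_id"}

def redact_url(url: str) -> str:
    """Replace the values of sensitive query parameters with a fixed marker."""
    parts = urlsplit(url)
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    cleaned = [
        (key, "REDACTED" if key.lower() in SENSITIVE_KEYS else value)
        for key, value in pairs
    ]
    return urlunsplit(parts._replace(query=urlencode(cleaned)))

example = redact_url("https://app.example.com/report?id=42&access_token=abc123")
print(example)
```

The more durable fix is on the application side: keep tokens out of URLs entirely and pass them in headers or cookies instead.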

Why This Matters: The Bottom Line

The examples above aren't isolated incidents; they represent a fundamental shift in the threat landscape. AI makes harmful actions faster, cheaper, and more scalable for cybercriminals, scammers, political manipulators, and other malicious actors.

The key takeaways:

- Democratization of sophisticated attacks: Advanced attack techniques once limited to elite hackers are now accessible to anyone with internet access.

- Speed and scale: AI enables attacks to be launched at a pace and volume that overwhelms traditional defenses.

- Erosion of trust: When deepfakes and AI-generated content are indistinguishable from authentic material, trust in digital communications breaks down.

- Financial impact: Individual incidents now regularly result in losses ranging from hundreds of thousands to millions of dollars.

- Societal consequences: Beyond financial costs, malicious AI threatens democratic processes, personal privacy, and social cohesion.

- Asymmetric advantage: Attackers can leverage AI to gain significant advantages over defenders who rely on traditional security approaches.

Steps for Defending Against Malicious AI

Understanding malicious AI is the first step toward defending against it. Organizations and individuals must:

Implement AI-powered defenses to counter AI-powered attacks

What to implement:

- AI-driven email security gateways that analyze email content, sender behavior, and linguistic patterns to detect AI-generated phishing attempts that traditional filters miss

- Behavioral analytics platforms using machine learning to establish baseline user and entity behavior, flagging anomalies that might indicate compromised accounts or insider threats

- Automated threat intelligence systems that continuously ingest data from global threat feeds, correlate indicators of compromise, and adapt defenses in real-time

- AI-enhanced endpoint detection and response (EDR) solutions that identify polymorphic malware and novel attack techniques by analyzing behavior rather than relying solely on signatures

- Network traffic analysis tools powered by machine learning to detect subtle patterns indicative of data exfiltration, lateral movement, or command-and-control communications
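
The baseline-and-anomaly idea behind behavioral analytics can be sketched in a few lines. This example assumes a simple z-score against a user's own history; the daily counts, the `z_cutoff` value, and the function name are hypothetical, and commercial platforms use considerably richer models:

```python
from statistics import mean, stdev

def is_anomalous(history, observed, z_cutoff=3.0):
    """Flag an observation that sits far above the user's own historical baseline."""
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # No variation in history: any different value is unusual
        return observed != baseline
    return (observed - baseline) / spread > z_cutoff

# Hypothetical daily outbound email counts for one user
daily_emails = [20, 25, 22, 18, 24, 21, 23]

normal_day = is_anomalous(daily_emails, 26)   # slightly busy day
spike_day = is_anomalous(daily_emails, 400)   # possible compromised mailbox
print(normal_day, spike_day)
```

Comparing each user against their own baseline, rather than a global limit, is what lets this kind of check catch a compromised account that still looks modest in absolute terms.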

Enhance verification procedures for high-stakes decisions and transactions

What to implement:

- Multi-channel verification protocols requiring confirmation through at least two independent communication channels for high-stakes requests (e.g., if someone requests a wire transfer via email, verify via a phone call to a known number, then confirm again via an in-person conversation or authenticated messaging platform)

- Pre-established verification codes or passphrases that family members and key business contacts can use to confirm their identity during unexpected urgent requests

- Digital authentication workflows requiring multiple approvers for financial transactions above certain thresholds, with each approver using multi-factor authentication

- Time-delay policies for large or unusual transactions, creating a cooling-off period during which additional verification can occur and potential fraud can be caught

- Out-of-band confirmation systems where sensitive requests must be approved through a separate, dedicated secure channel rather than responding directly to the initial communication
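
The controls above can be combined into a single release check. The following is a minimal sketch, assuming a hypothetical policy with a dollar threshold, two approvers, two independent verification channels, and a 24-hour cooling-off period; all names and values are illustrative rather than recommended settings:

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Hypothetical policy values
APPROVAL_THRESHOLD = 10_000
REQUIRED_APPROVERS = 2
REQUIRED_CHANNELS = 2
COOLING_OFF = timedelta(hours=24)

@dataclass
class TransferRequest:
    amount: float
    requested_at: datetime
    approvers: set = field(default_factory=set)
    verified_channels: set = field(default_factory=set)

def may_release(req, now):
    """Return (allowed, reason) for a transfer under the policy above."""
    if req.amount < APPROVAL_THRESHOLD:
        return True, "below threshold"
    if len(req.approvers) < REQUIRED_APPROVERS:
        return False, "needs more approvers"
    if len(req.verified_channels) < REQUIRED_CHANNELS:
        return False, "needs verification on a second channel"
    if now - req.requested_at < COOLING_OFF:
        return False, "cooling-off period still active"
    return True, "all checks passed"

t0 = datetime(2025, 1, 1, 9, 0)
req = TransferRequest(250_000, t0, {"cfo", "controller"}, {"email"})

first_check = may_release(req, t0 + timedelta(hours=1))    # only one channel so far
req.verified_channels.add("phone_callback")                # callback to a known number
second_check = may_release(req, t0 + timedelta(hours=1))   # still inside the delay
third_check = may_release(req, t0 + timedelta(hours=25))   # every condition met
print(first_check, second_check, third_check)
```

The design choice worth noting is that no single convincing message, however realistic, can satisfy every condition on its own.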

Educate stakeholders about deepfakes, voice cloning, and AI-enhanced social engineering

What to cover:

- Deepfake awareness training showing real examples of deepfake audio and video to demonstrate how convincing these fakes can be, while teaching warning signs like unnatural lighting, unusual blinking patterns, or audio artifacts

- Social engineering tactics specific to AI, including how attackers use AI to gather intelligence from social media, generate personalized phishing content, and create convincing pretexts

- Voice cloning threat education explaining how little audio is needed to clone a voice (sometimes just 3-5 seconds from a social media video) and practicing scenarios where employees must verify unexpected voice calls

- Safe AI tool usage guidelines for employees using generative AI tools, covering what information should never be entered into AI systems (proprietary data, customer information, credentials) and how to identify potentially malicious AI tools

- Browser extension and software vetting, training employees to scrutinize permissions requested by browser extensions, mobile apps, and software, especially those branded with popular AI names
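
Permission review can be partly automated. As a rough sketch, the snippet below reads a Chrome extension's `manifest.json` and lists requested permissions that deserve a closer look. The `RISKY_PERMISSIONS` set is an illustrative assumption rather than an official classification, and the sample manifest is invented:

```python
import json

# Permissions that merit extra scrutiny; an illustrative list, not a standard
RISKY_PERMISSIONS = {"tabs", "history", "cookies", "webRequest", "clipboardRead", "<all_urls>"}

def risky_permissions(manifest_text: str) -> list:
    """Return the sorted risky permissions requested in a manifest.json document."""
    manifest = json.loads(manifest_text)
    requested = set(manifest.get("permissions", []))
    requested |= set(manifest.get("host_permissions", []))
    return sorted(requested & RISKY_PERMISSIONS)

# Invented manifest for an extension branded as an AI assistant
sample_manifest = """
{
  "name": "Hypothetical AI Sidebar",
  "manifest_version": 3,
  "permissions": ["tabs", "storage", "history"],
  "host_permissions": ["<all_urls>"]
}
"""

flagged = risky_permissions(sample_manifest)
print(flagged)
```

A flagged permission is not proof of malice, since many legitimate extensions need broad access, but it is a useful prompt to ask why an "AI assistant" needs to read every tab and the full browsing history.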

Adopt zero-trust architectures that don't rely solely on identity verification

What to implement:

- Identity-centric security models where access decisions are based on continuous verification of user and device identity, not just initial login credentials

- Micro-segmentation dividing networks into small zones with separate access requirements, limiting lateral movement if attackers compromise one area

- Least-privilege access controls ensuring users and applications have only the minimum permissions necessary to perform their functions, regularly reviewing and revoking unnecessary access

- Continuous authentication mechanisms that monitor user behavior throughout sessions, not just at login, triggering re-authentication or blocking access if anomalies are detected

- Device trust verification assessing the security posture of devices before granting access to resources, ensuring they're patched, running endpoint protection, and not exhibiting signs of compromise
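
A zero-trust access decision evaluates identity, device posture, and least privilege together on every request. The sketch below is a simplified illustration; the `AccessRequest` fields, the `ALLOWED_RESOURCES` map, and the user names are hypothetical, and production systems would pull these signals from identity providers and device management tools:

```python
from dataclasses import dataclass

@dataclass
class AccessRequest:
    user: str
    mfa_verified: bool
    device_patched: bool
    endpoint_protection_running: bool
    resource: str

# Hypothetical least-privilege map: each user lists only the resources they need
ALLOWED_RESOURCES = {"alice": {"payroll-db"}, "bob": {"wiki"}}

def evaluate(req: AccessRequest) -> str:
    """Evaluate every request on its own; nothing is trusted because of a prior login."""
    if not req.mfa_verified:
        return "deny: re-authenticate"
    if not (req.device_patched and req.endpoint_protection_running):
        return "deny: device not trusted"
    if req.resource not in ALLOWED_RESOURCES.get(req.user, set()):
        return "deny: outside least privilege"
    return "allow"

ok = evaluate(AccessRequest("alice", True, True, True, "payroll-db"))
stale_device = evaluate(AccessRequest("alice", True, False, True, "payroll-db"))
wrong_resource = evaluate(AccessRequest("bob", True, True, True, "payroll-db"))
print(ok, stale_device, wrong_resource)
```

Even with valid credentials, a request fails if the device or the requested resource does not check out, which limits what stolen or deepfake-obtained credentials can reach.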

Monitor for indicators of AI-generated content and automated attacks

What to monitor:

- AI-generated content signatures, including unusual linguistic patterns, repetitive phrasing structures, or content that seems too perfect (no typos, perfect grammar in contexts where that's unusual)

- Behavioral anomalies such as users accessing resources at unusual times, from unusual locations, or in patterns inconsistent with their role

- Volume-based indicators like sudden spikes in outbound email, unusual amounts of data being accessed or transferred, or rapid-fire authentication attempts

- Technical artifacts, including evidence of automated tools, scripted behaviors, or bot-like activity patterns in logs

- Social engineering attempt patterns tracking increases in phishing attempts, vishing calls, or suspicious requests targeting specific departments or individuals
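
Volume-based indicators such as rapid-fire authentication attempts lend themselves to a sliding-window check. This is a minimal sketch, assuming timestamps in seconds for a single account; the `max_attempts` and `window_seconds` defaults are hypothetical and would need tuning against real logs:

```python
from collections import deque

def find_bursts(timestamps, max_attempts=5, window_seconds=10):
    """Return the timestamps at which more than max_attempts fall within window_seconds."""
    window = deque()
    alerts = []
    for ts in sorted(timestamps):
        window.append(ts)
        # Drop attempts that have aged out of the window
        while ts - window[0] > window_seconds:
            window.popleft()
        if len(window) > max_attempts:
            alerts.append(ts)
    return alerts

# Hypothetical login attempt times (seconds) for one account
human_like = [0, 40, 95, 160, 300]
scripted = [0, 1, 2, 3, 4, 5, 6, 200]

human_alerts = find_bursts(human_like)
scripted_alerts = find_bursts(scripted)
print(human_alerts, scripted_alerts)
```

The same pattern applies to outbound email or file access counts: what matters is the rate over a short window, not the total for the day.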

Develop incident response plans specifically addressing AI-enabled threats

What to plan for:

- Deepfake incident protocols detailing how to respond if your organization discovers that executive deepfakes are being used in fraud attempts, including crisis communication strategies and legal response options

- Credential compromise scenarios with clear procedures for when AI-assisted attacks successfully harvest credentials, including rapid password resets, session termination, and investigation of what the compromised account accessed

- Data exfiltration response addressing how to contain breaches, assess what data was stolen, notify affected parties, and prevent further loss when AI tools are used to rapidly extract and analyze data

- Reputation management procedures for when your organization's name, logos, or executive likenesses are used in AI-generated scams targeting customers or partners

- Business email compromise (BEC) playbooks specifically addressing AI-enhanced BEC attacks that use perfect grammar, legitimate-seeming email addresses, and convincing pretexts

The race between malicious AI and defensive AI is accelerating. Organizations that understand this evolving threat landscape and take proactive steps to defend themselves will be far better positioned to weather the coming challenges.

The question is no longer whether AI will be weaponized; it already has been. The question is: are you prepared?
